2026 has just begun. If you are reading this, chances are you already know that data engineering is one of the most future-proof careers in tech. But beyond the hype, what does the market actually look like this year?

Let’s talk reality.

Across global markets, data engineers continue to command strong salaries. In India, compensation scales sharply with experience, with senior engineers at top-tier companies reaching the highest pay brackets. In the United States, senior data engineers typically earn between $147,000 and $183,000, with elite companies offering significantly more for high-impact roles.

But here is the catch.

The interview bar has never been higher.

With the rise of AI-assisted development, workflow automation tools, and managed cloud platforms, companies are no longer looking for someone who can simply write scripts. They want engineers who can architect, optimize, and scale data systems. They want people who understand distributed systems, real-time pipelines, and business impact — not just syntax.

If you are:

- A student preparing for placements

- An analyst trying to pivot into engineering

- A backend developer transitioning into data

- Or an experienced engineer overwhelmed by tools like Airflow, Kafka, Spark, dbt, Kubernetes, and more

You are not alone.

This guide cuts through the noise.

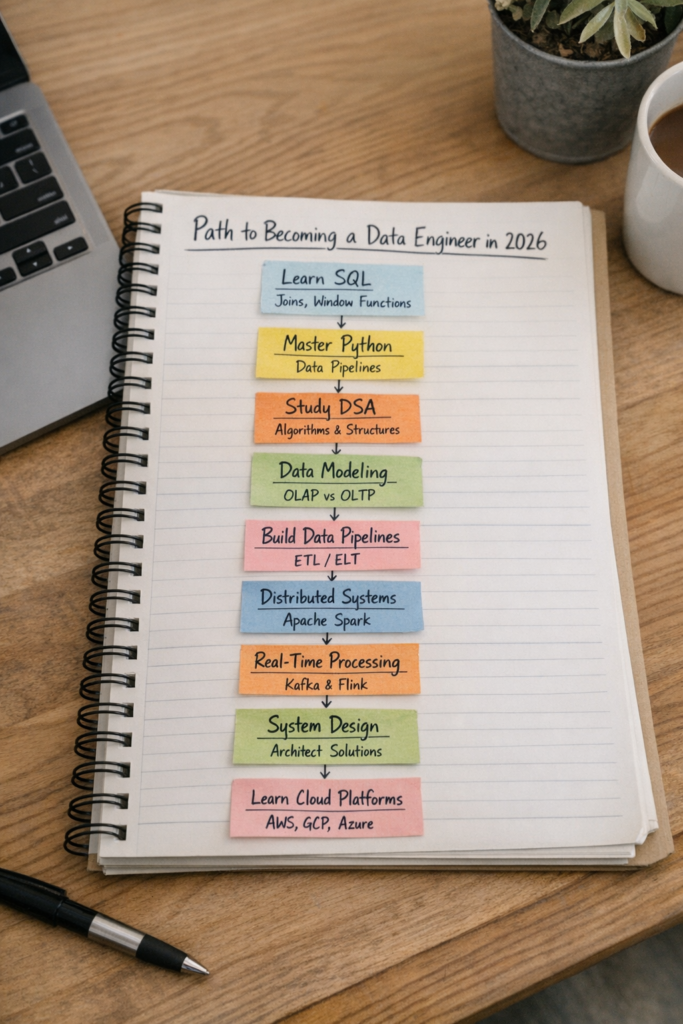

Below are the 10 essential skills you need to master to become a successful data engineer in 2026, broken down clearly for freshers and experienced engineers. Every resource mentioned is free, and the focus is on fundamentals that will remain valuable regardless of tooling trends.

Let’s dive in.

Skill #1: SQL — The Undisputed King

If you remember only one thing from this article, let it be this:

SQL is king.

It does not matter how strong you are in Python or DSA. If you cannot manipulate data efficiently in a relational database, you will struggle in data engineering interviews.

Why SQL Matters More Than Ever in 2026

Despite all advancements in AI and automation, structured data still lives in relational systems. Warehouses, transactional systems, analytics engines — they all rely heavily on SQL.

In interviews, you are typically given:

- An ERD (Entity Relationship Diagram)

- A dataset schema

- Analytical business questions

You will be expected to write efficient, readable, optimized queries.

Must-Know SQL Concepts

You need to be comfortable with:

1. Joins

- Inner Join

- Left Join

- Right Join

- Full Join

- Cross Join

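- Anti Join (most SQL dialects have no dedicated keyword for it; it is usually written with NOT EXISTS, or with a LEFT JOIN filtered on IS NULL)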

You must understand cardinality and how join conditions affect row counts.
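To see how join type and cardinality change row counts, here is a minimal sketch using Python's built-in sqlite3 module. The `customers` and `orders` tables and their rows are made up for illustration; the point is only to compare how many rows each join returns.

```python
import sqlite3

# Hypothetical tables: 3 customers, 3 orders (one customer has no orders).
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE customers (customer_id INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE orders (order_id INTEGER PRIMARY KEY, customer_id INTEGER, amount REAL);
    INSERT INTO customers VALUES (1, 'Asha'), (2, 'Ben'), (3, 'Chen');
    INSERT INTO orders VALUES (10, 1, 50.0), (11, 1, 20.0), (12, 2, 35.0);
""")

def count_rows(sql):
    """Return how many rows the given SELECT produces."""
    return conn.execute(f"SELECT COUNT(*) FROM ({sql})").fetchone()[0]

# One-to-many: Asha matches two orders, so she appears twice.
inner_rows = count_rows(
    "SELECT c.name, o.order_id FROM customers c "
    "JOIN orders o ON o.customer_id = c.customer_id"
)
# LEFT JOIN keeps Chen too, with NULL order columns.
left_rows = count_rows(
    "SELECT c.name, o.order_id FROM customers c "
    "LEFT JOIN orders o ON o.customer_id = c.customer_id"
)
# CROSS JOIN pairs every customer with every order.
cross_rows = count_rows("SELECT c.name, o.order_id FROM customers c CROSS JOIN orders o")

# Anti join: customers with no matching order, written with NOT EXISTS.
customers_without_orders = conn.execute("""
    SELECT c.name FROM customers c
    WHERE NOT EXISTS (SELECT 1 FROM orders o WHERE o.customer_id = c.customer_id)
""").fetchall()

print(inner_rows, left_rows, cross_rows, customers_without_orders)
```

Before running a join in an interview, it helps to say out loud how many rows you expect and why. That habit catches accidental fan-out early.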

2. Window Functions

If you don’t know these yet, start today:

- RANK()

- DENSE_RANK()

- ROW_NUMBER()

- LEAD()

- LAG()

Window functions separate average SQL users from strong engineers.
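The difference between these functions is easiest to see on tied values. The sketch below runs them through Python's sqlite3 module against a made-up `scores` table. It assumes the bundled SQLite is version 3.25 or newer, which is when window functions were added.

```python
import sqlite3

# Hypothetical data with a tie at 90 points.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE scores (player TEXT, points INTEGER);
    INSERT INTO scores VALUES ('a', 100), ('b', 90), ('c', 90), ('d', 80);
""")

rows = conn.execute("""
    SELECT player,
           points,
           RANK()       OVER (ORDER BY points DESC)         AS rnk,        -- gaps after ties
           DENSE_RANK() OVER (ORDER BY points DESC)         AS dense_rnk,  -- no gaps
           ROW_NUMBER() OVER (ORDER BY points DESC, player) AS row_num,    -- always unique
           LAG(points)  OVER (ORDER BY points DESC, player) AS prev_points,
           LEAD(points) OVER (ORDER BY points DESC, player) AS next_points
    FROM scores
    ORDER BY points DESC, player
""").fetchall()

for row in rows:
    print(row)
```

Note the tie-breaker (`player`) in the ROW_NUMBER, LAG, and LEAD windows. Without it, the order of tied rows is not guaranteed.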

3. CTEs and Subqueries

Structure matters. Clean SQL is readable SQL.
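As a small illustration of how CTEs keep a query readable, the sketch below names each intermediate step instead of nesting subqueries. The `orders` table and the 100-unit threshold are invented for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE orders (order_id INTEGER, customer TEXT, amount REAL);
    INSERT INTO orders VALUES (1, 'asha', 50), (2, 'asha', 70), (3, 'ben', 20), (4, 'chen', 200);
""")

# Each CTE is one named, testable step; the final SELECT reads top to bottom.
query = """
WITH customer_totals AS (
    SELECT customer, SUM(amount) AS total_spent
    FROM orders
    GROUP BY customer
),
big_spenders AS (
    SELECT customer, total_spent
    FROM customer_totals
    WHERE total_spent >= 100
)
SELECT customer, total_spent
FROM big_spenders
ORDER BY total_spent DESC
"""

big_spenders = conn.execute(query).fetchall()
print(big_spenders)
```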

4. Table Relationships

Understand:

- One-to-one

- One-to-many

- Many-to-many

And how those relationships affect data modeling and joins.

How to Practice for Free

- SQLBolt

- LeetCode SQL 50 (basic → advanced)

- Mode Analytics SQL tutorials

SQL is not optional. It is foundational.

Skill #2: Python — Your Pipeline Builder

While SQL handles data manipulation inside databases, Python is how you build real-world data pipelines.

In 2026, Python remains the dominant language in data engineering.

What You Must Know

1. Core Data Structures

- Lists

- Tuples

- Sets

- Dictionaries

You must understand time complexity of operations.
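Here is a short sketch of why the choice of structure matters. The event names and the `warehouse_a` label are placeholders, not taken from any real system.

```python
from collections import Counter

events = ["login", "click", "click", "logout", "login", "click"]

# Set membership is O(1) on average; the same check on a list is O(n).
# Using a set for "have I seen this?" keeps the whole loop O(n).
seen = set()
unique_in_order = []  # a list preserves first-seen order
for event in events:
    if event not in seen:
        seen.add(event)
        unique_in_order.append(event)

# Counter is a dict subclass: O(1) average lookup and update per key.
counts = Counter(events)

# Tuples are immutable and hashable, so they can serve as dictionary keys.
point = (12.5, 77.6)
location_names = {point: "warehouse_a"}

print(unique_in_order, counts["click"], location_names[point])
```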

2. Object-Oriented Programming

You will often wrap pipeline logic into reusable classes. Know:

- Classes

- Inheritance

- Composition

- Iterators

- Generators
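One way these ideas fit together, as a rough sketch rather than a recommended framework: a generator streams rows in chunks so the whole dataset never sits in memory, and a pipeline is composed of small step classes. All class and function names here (`Step`, `DropNulls`, `Pipeline`, `read_in_chunks`) are hypothetical.

```python
from typing import Iterable, Iterator, List


def read_in_chunks(rows: Iterable[dict], chunk_size: int) -> Iterator[List[dict]]:
    """Generator: yield lists of at most chunk_size rows, lazily."""
    chunk = []
    for row in rows:
        chunk.append(row)
        if len(chunk) == chunk_size:
            yield chunk
            chunk = []
    if chunk:  # emit the final, possibly smaller, chunk
        yield chunk


class Step:
    """Base class: every step takes rows and returns rows."""
    def run(self, rows: List[dict]) -> List[dict]:
        raise NotImplementedError


class DropNulls(Step):  # inheritance: a concrete kind of Step
    def __init__(self, field: str):
        self.field = field

    def run(self, rows):
        return [r for r in rows if r.get(self.field) is not None]


class Uppercase(Step):
    def __init__(self, field: str):
        self.field = field

    def run(self, rows):
        return [{**r, self.field: r[self.field].upper()} for r in rows]


class Pipeline:  # composition: a pipeline *has* steps
    def __init__(self, steps: List[Step]):
        self.steps = steps

    def run(self, rows):
        for step in self.steps:
            rows = step.run(rows)
        return rows


source = [{"name": "asha"}, {"name": None}, {"name": "ben"}]
pipeline = Pipeline([DropNulls("name"), Uppercase("name")])
cleaned = [row for chunk in read_in_chunks(source, 2) for row in pipeline.run(chunk)]
print(cleaned)
```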

3. File Handling and APIs

- JSON parsing

- CSV handling

- REST API consumption
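A minimal sketch of the JSON-to-CSV path using only the standard library. The `api_response_body` string stands in for the body of a REST response (no network call is made here), and its fields are invented for the example.

```python
import csv
import io
import json

# Stand-in for the text body returned by a REST API call.
api_response_body = (
    '{"users": [{"id": 1, "name": "Asha", "city": null},'
    ' {"id": 2, "name": "Ben", "city": "Pune"}]}'
)

# JSON parsing: null becomes None, objects become dicts.
payload = json.loads(api_response_body)
users = payload["users"]

# CSV handling: write with a fixed header so column order is explicit.
buffer = io.StringIO()
writer = csv.DictWriter(buffer, fieldnames=["id", "name", "city"])
writer.writeheader()
for user in users:
    writer.writerow({**user, "city": user["city"] or ""})  # make missing values explicit
csv_text = buffer.getvalue()

# Reading it back: note that every CSV value comes back as a string.
parsed_back = list(csv.DictReader(io.StringIO(csv_text)))
print(parsed_back)
```

The type loss on the way back (the id `1` returns as `"1"`) is a common source of pipeline bugs, which is one reason typed formats are popular for storage.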

4. Basic Data Libraries

- Pandas

- Requests

Even if large-scale systems use Spark or distributed tools, understanding data manipulation in Python is crucial.

Free Resources

- Python official docs

- Real Python tutorials

- FreeCodeCamp Python course

Skill #3: Data Structures & Algorithms (DSA)

Yes, it is still here.

Data engineering is a subset of software engineering. You will likely face at least one DSA round.

The good news?

DSA for data engineering is usually less intense than for backend roles.

Focus Areas

- Arrays and Strings

- Hash Maps

- Sliding Window

- Two Pointers

- Basic Graph Traversal

- Sorting & Searching
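To give a feel for the level expected, here is one classic problem that combines a hash map with a sliding window: the length of the longest substring without repeated characters. The function name is my own, and this is one common approach, not the only one.

```python
def longest_unique_run(s: str) -> int:
    """Length of the longest substring of s with no repeated character.

    Sliding window: `start` is the left edge; the dict remembers where each
    character was last seen so the window can jump past a repeat in O(1).
    Overall time is O(n), extra space is O(distinct characters).
    """
    last_seen = {}
    start = 0
    best = 0
    for i, ch in enumerate(s):
        # Only move the left edge if the repeat is inside the current window.
        if ch in last_seen and last_seen[ch] >= start:
            start = last_seen[ch] + 1
        last_seen[ch] = i
        best = max(best, i - start + 1)
    return best


print(longest_unique_run("abcabcbb"))
```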

What to Practice

- LeetCode 150 list

- Easy to Medium problems

- Focus on clarity and communication

You are not expected to solve hardcore competitive programming questions, but you must show problem-solving ability.

Skill #4: Data Modeling — Where Juniors and Seniors Separate

Data modeling is often what differentiates junior engineers from senior ones.

You must understand:

Dimensional Modeling

- Fact tables

- Dimension tables

- Star schema

- Snowflake schema

Slowly Changing Dimensions (SCD)

Know:

- Type 1

- Type 2

- Type 3

Be able to explain when to use each.
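The contrast between Type 1 and Type 2 can be sketched in a few lines of plain Python. The column names (`valid_from`, `valid_to`, `is_current`) are a common convention but are assumptions here, and in practice this logic usually lives in a warehouse MERGE statement or a dbt snapshot rather than in application code.

```python
from datetime import date


def apply_type1(dimension, incoming):
    """Type 1: overwrite the attribute in place. No history is kept."""
    for row in dimension:
        if row["customer_id"] == incoming["customer_id"]:
            row["city"] = incoming["city"]
    return dimension


def apply_type2(dimension, incoming, effective):
    """Type 2: close the current row and append a new versioned row."""
    for row in dimension:
        if row["customer_id"] == incoming["customer_id"] and row["is_current"]:
            if row["city"] == incoming["city"]:
                return dimension  # nothing changed, so no new version
            row["valid_to"] = effective
            row["is_current"] = False
            break
    dimension.append({
        "customer_id": incoming["customer_id"],
        "city": incoming["city"],
        "valid_from": effective,
        "valid_to": None,
        "is_current": True,
    })
    return dimension


dim_customer = [{
    "customer_id": 1, "city": "Pune",
    "valid_from": date(2025, 1, 1), "valid_to": None, "is_current": True,
}]
apply_type2(dim_customer, {"customer_id": 1, "city": "Mumbai"}, date(2026, 1, 1))
apply_type2(dim_customer, {"customer_id": 1, "city": "Mumbai"}, date(2026, 2, 1))  # no-op

for row in dim_customer:
    print(row)
```

Type 3 would instead add a column such as `previous_city` to the same row, which keeps only one prior value.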

OLTP vs OLAP

OLTP (Transactional)

- Row-based storage

- Optimized for writes

- High concurrency

OLAP (Analytical)

- Columnar storage

- Optimized for reads

- Aggregations

Understand why columnar storage improves analytical query performance.
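A toy model makes the intuition concrete. The sketch below stores the same made-up orders in a row layout and a column layout, then counts how many values an aggregation has to touch in each. Real engines add compression and vectorized execution on top, so treat this only as an illustration of the I/O argument.

```python
# Row layout: each record is stored together (good for fetching one order).
rows = [
    {"order_id": 1, "customer": "asha", "amount": 50.0, "notes": "gift wrap"},
    {"order_id": 2, "customer": "ben", "amount": 20.0, "notes": ""},
    {"order_id": 3, "customer": "chen", "amount": 200.0, "notes": "express"},
]

# Column layout: each column is stored together (good for scanning one field).
columns = {key: [row[key] for row in rows] for key in rows[0]}


def total_from_rows(rows):
    """Sum amounts; a row store reads every field of every row to get there."""
    values_touched = sum(len(row) for row in rows)
    return sum(row["amount"] for row in rows), values_touched


def total_from_columns(columns):
    """Sum amounts; a column store reads only the 'amount' column."""
    amounts = columns["amount"]
    return sum(amounts), len(amounts)


row_total, row_touched = total_from_rows(rows)
col_total, col_touched = total_from_columns(columns)
print(row_total, row_touched, col_total, col_touched)
```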

Storage Formats

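- Parquet (columnar)

- ORC (columnar)

- Avro (row-oriented, common for streaming and record-at-a-time writes)

Know why columnar formats such as Parquet and ORC are preferred for analytics, and where a row-oriented format such as Avro fits better.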

If you are a fresher, everything up to this point is gold for interviews.

From here onward, we are stepping into more experienced-level territory.

Skill #5: Data Pipelines — The Core of the Job

This is your bread and butter.

If you are an experienced engineer, expect at least one pipeline design question.

You must understand:

ETL vs ELT

Understand why the industry shifted toward ELT, a shift enabled by tools like:

- dbt

- Snowflake

- BigQuery

Reliability

Answer these in interviews:

- What happens if the pipeline fails?

- How do you backfill missing data?

- How do you ensure idempotency?

- How do you test data quality?

Idempotency

An idempotent pipeline is one that can be rerun with the same input and leave the target in the same state, without duplicating or corrupting data.

Using this term correctly in interviews makes a strong impression.
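One common way to get idempotency is to overwrite a whole partition inside a transaction instead of blindly appending. The sketch below shows that pattern with sqlite3; the `daily_sales` table and `load_partition` function are hypothetical, and other approaches (upserts on a natural key, MERGE statements) are equally valid.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE daily_sales (sale_date TEXT, store TEXT, amount REAL)")


def load_partition(conn, sale_date, records):
    """Replace all rows for one date atomically, so reruns cannot duplicate."""
    with conn:  # commits on success, rolls back if anything raises
        conn.execute("DELETE FROM daily_sales WHERE sale_date = ?", (sale_date,))
        conn.executemany(
            "INSERT INTO daily_sales VALUES (?, ?, ?)",
            [(sale_date, store, amount) for store, amount in records],
        )


batch = [("north", 100.0), ("south", 250.0)]
load_partition(conn, "2026-01-01", batch)
load_partition(conn, "2026-01-01", batch)  # simulated rerun after a failure

row_count = conn.execute("SELECT COUNT(*) FROM daily_sales").fetchone()[0]
total = conn.execute("SELECT SUM(amount) FROM daily_sales").fetchone()[0]
print(row_count, total)
```

The same function also handles backfills: calling it for an older date rewrites just that date's partition.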

Workflow Orchestration

Know:

- Airflow DAGs

- Task dependencies

- Retry logic
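To make the ideas behind a DAG concrete without requiring an Airflow install, here is a standard-library sketch. It is not Airflow's API: it uses `graphlib.TopologicalSorter` (Python 3.9+) to order tasks by their dependencies and a hypothetical `run_with_retries` helper to rerun a failing task. The task names and the simulated failure are invented.

```python
from graphlib import TopologicalSorter


def run_with_retries(task, retries):
    """Call task(); on failure, try again up to `retries` more times."""
    last_error = None
    for _attempt in range(retries + 1):
        try:
            return task()
        except Exception as exc:  # a real orchestrator would also log and back off
            last_error = exc
    raise last_error


run_log = []
attempts = {"load": 0}


def extract():
    run_log.append("extract")


def transform():
    run_log.append("transform")


def load():
    attempts["load"] += 1
    if attempts["load"] == 1:
        raise RuntimeError("transient warehouse error (simulated)")
    run_log.append("load")


tasks = {"extract": extract, "transform": transform, "load": load}

# Mapping of task -> set of upstream tasks it depends on.
dependencies = {"transform": {"extract"}, "load": {"transform"}}

# static_order() yields each task only after all of its upstream tasks.
for name in TopologicalSorter(dependencies).static_order():
    run_with_retries(tasks[name], retries=2)

print(run_log, attempts)
```

Retries only make sense when tasks are idempotent, which is why the two topics usually come up together in interviews.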

Skill #6: Distributed Systems

You do not need to build Spark from scratch.

But you must understand:

- How Spark distributes computation

- Driver vs Executors

- Partitioning

- Shuffle

Understand parallel processing fundamentals.

Distributed systems knowledge shows architectural maturity.
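Partitioning and shuffle are easier to explain once you have simulated them. The sketch below is plain Python, not Spark: it counts keys across three pretend input partitions, then "shuffles" the partial counts so that every occurrence of a key lands in the same output partition. The CRC32-based partitioner is an assumption chosen for stable output, not what Spark uses internally.

```python
import zlib
from collections import defaultdict


def partition_for(key: str, num_partitions: int) -> int:
    """Stable hash partitioner: the same key always maps to the same partition."""
    return zlib.crc32(key.encode("utf-8")) % num_partitions


def map_side_counts(partition):
    """Runs independently per input partition (like work on one executor)."""
    counts = defaultdict(int)
    for key in partition:
        counts[key] += 1
    return dict(counts)


def shuffle(partial_counts, num_partitions):
    """Move partial results so each key is gathered in exactly one bucket."""
    buckets = [defaultdict(list) for _ in range(num_partitions)]
    for partial in partial_counts:
        for key, count in partial.items():
            buckets[partition_for(key, num_partitions)][key].append(count)
    return buckets


def reduce_side(bucket):
    """Combine the partial counts for every key in one bucket."""
    return {key: sum(values) for key, values in bucket.items()}


input_partitions = [["a", "b", "a"], ["b", "c"], ["a", "c", "c"]]
partials = [map_side_counts(p) for p in input_partitions]
buckets = shuffle(partials, num_partitions=2)

final_counts = {}
for bucket in buckets:
    final_counts.update(reduce_side(bucket))
print(final_counts)
```

The shuffle step is the expensive one in a real cluster because it moves data across the network, which is why reducing data before the shuffle (as `map_side_counts` does) matters.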

Skill #7: Event Processing & Real-Time Systems

The world is moving from batch to real-time.

Think about:

- Social media feed recommendations

- Fraud detection

- Live dashboards

These are real-time pipelines.

Tools to Understand

- Kafka

- Flink

- Spark Structured Streaming

Know:

- Producers

- Consumers

- Partitions

- Offsets
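These four terms fit together in a simple mental model, sketched below as a toy in-memory log. This is not the Kafka client API: `ToyTopic` and `ToyConsumer` are hypothetical classes, and the CRC32 key hashing is a stand-in for Kafka's own partitioner. What carries over is the behaviour: records with the same key go to the same partition, order is kept within a partition, and a consumer tracks its position with an offset per partition.

```python
import zlib


class ToyTopic:
    """An append-only log split into partitions."""

    def __init__(self, num_partitions: int):
        self.partitions = [[] for _ in range(num_partitions)]

    def produce(self, key: str, value: str):
        """Append a record; return (partition, offset) where it landed."""
        partition = zlib.crc32(key.encode("utf-8")) % len(self.partitions)
        self.partitions[partition].append((key, value))
        return partition, len(self.partitions[partition]) - 1


class ToyConsumer:
    """Reads from a topic and remembers the next offset for each partition."""

    def __init__(self, topic: ToyTopic):
        self.topic = topic
        self.offsets = [0] * len(topic.partitions)

    def poll(self, partition: int, max_records: int = 10):
        start = self.offsets[partition]
        records = self.topic.partitions[partition][start:start + max_records]
        self.offsets[partition] = start + len(records)  # advance past what was read
        return records


topic = ToyTopic(num_partitions=3)
first = topic.produce("user-1", "click")
second = topic.produce("user-1", "purchase")

consumer = ToyConsumer(topic)
first_poll = consumer.poll(first[0])
second_poll = consumer.poll(first[0])  # nothing new since the last offset
print(first, second, first_poll, second_poll)
```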

Be able to explain when to use batch vs streaming.

Skill #8: System Design — The Boss Fight

System design interviews are subjective.

There is no single correct answer.

You might be asked:

- Design a clickstream tracking system

- Replicate production DB to warehouse

- Design a recommendation logging system

You Should Discuss

- Data ingestion

- CDC (Change Data Capture)

- Caching

- Load balancing

- API gateways

- Storage

- Monitoring

Understanding Docker and Kubernetes also helps here.

Communication clarity matters more than memorized answers.

Skill #9: Business Thinking

You are not just a coder.

You must understand impact.

You might be asked:

- How do you measure product success?

- What metrics would you design?

- How did your past project improve business?

Use the STAR method:

- Situation

- Task

- Action

- Result

Data engineering is not about moving data. It is about enabling decisions.

Skill #10: Cloud Computing

In 2026, if you do not know cloud, you do not know data engineering.

You do not need certifications.

Pick one:

- AWS

- GCP

- Azure

Understand:

Storage

- S3 / GCS

Compute

- EC2

- Lambda

Databases

- RDS

- DynamoDB

Warehousing

- Redshift

- Snowflake

Big Data

- EMR

- Kinesis

- Managed Kafka

Cloud-native architecture is the industry standard now.

Freshers vs Experienced Engineers

If You Are a Fresher:

Focus heavily on:

- SQL

- Python

- DSA

- Data Modeling basics

If You Are Experienced:

You must additionally master:

- Pipeline design

- Distributed systems

- Real-time systems

- System design

- Cloud architecture

- Business thinking

Final Thoughts

Data engineering is a massive field.

The number of tools can feel overwhelming.

But here is the truth:

You do not need to master every tool.

You need to master the fundamentals.

Focus on:

- Why an architecture works

- Trade-offs

- Scalability

- Reliability

- Business value

Every expert started where you are now.

Staring at a long list of tools.

Feeling overwhelmed.

But tools change. Fundamentals do not.

Master these 10 skills, practice consistently, build projects, and 2026 can absolutely be your year.

The opportunity is real.

The bar is higher.

But so is the reward.

Data Engineering Career & Salary FAQs (2026)

1. Is data engineering a good career in 2026?

Yes. Data engineering remains one of the most future-proof and high-paying careers in 2026. With companies relying heavily on data-driven decision-making, the demand for scalable, reliable, and real-time data systems continues to grow. Organizations are investing more in infrastructure than ever before, making skilled data engineers essential to modern businesses.

2. Is data engineering future-proof in 2026?

Data engineering is highly future-proof because data volumes keep growing. Even with AI automation, companies still need engineers who can architect, scale, and optimize pipelines. Tools may change, but the fundamentals of distributed systems, cloud computing, and data modeling remain relevant.

3. What is the salary of a data engineer in 2026?

Salaries vary by location and experience. In the United States, senior data engineers earn between $147,000 and $183,000 annually, with elite companies paying significantly more. In India, compensation scales sharply with experience, especially in top-tier companies.

4. Why are data engineering salaries so high?

Data engineers build the backbone of analytics and AI systems. Without clean, reliable, and scalable data infrastructure, businesses cannot operate efficiently. Their ability to design distributed systems and manage cloud infrastructure makes them high-impact contributors.

5. Is data engineering better than data science in 2026?

Both are strong careers, but data engineering offers more stability in 2026 due to infrastructure demand. While AI models evolve quickly, the need for robust data pipelines and systems remains constant.

6. Is data engineering harder than software engineering?

Not necessarily harder, but different. Data engineering is a specialization within software engineering. It requires programming skills plus knowledge of distributed systems, databases, and cloud architecture. The complexity lies in system design and scalability rather than frontend or UI logic.

7. Can freshers become data engineers in 2026?

Yes. Freshers can enter data engineering by mastering SQL, Python, basic DSA, and data modeling fundamentals. Entry-level roles focus heavily on query writing and data transformation.

8. How competitive are data engineering interviews in 2026?

Very competitive. With AI-assisted tools available, companies expect deeper architectural knowledge rather than just coding ability. Interview bars are higher, especially for mid-level and senior roles.

9. How long does it take to become job-ready in data engineering?

With focused learning, 6–12 months of consistent practice in SQL, Python, and data modeling can make you interview-ready. Experienced developers transitioning may need less time.

10. Can I learn data engineering for free?

Yes. Many free resources exist for SQL, Python, cloud basics, and system design. Fundamentals matter more than expensive certifications.

SQL & Database FAQs

11. Why is SQL important for data engineers?

SQL is the foundation of data engineering. Almost all warehouses and relational databases rely on SQL. Interview rounds heavily test joins, aggregations, and window functions.

12. Is SQL enough to get a data engineering job?

For entry-level roles, strong SQL skills can open doors. However, mid-level and senior roles require Python, pipeline knowledge, cloud computing, and system design expertise.

13. What SQL topics are asked in data engineering interviews?

Common topics include joins, window functions (RANK, LEAD, LAG), CTEs, subqueries, GROUP BY, indexing, and query optimization.

14. Are window functions important in 2026 interviews?

Yes. Window functions separate average candidates from strong ones. Many analytical interview questions rely on ranking, running totals, and partition logic.

15. How can I practice SQL effectively?

Practice using structured platforms with real-world schemas. Focus on solving business-oriented queries rather than memorizing syntax.

Python & Programming FAQs

16. Is Python mandatory for data engineering?

Yes. Python is widely used for building pipelines, automation, API integrations, and data transformations.

17. What Python skills should a data engineer know?

You should understand data structures (lists, dictionaries, sets), OOP concepts, file handling, API calls, and libraries like Pandas.

18. Do data engineers need object-oriented programming?

Yes. Pipelines are often structured as reusable classes. OOP ensures maintainable and modular code.

19. How important is DSA for data engineering?

You will likely face at least one DSA round. Focus on arrays, hash maps, sorting, and basic graph traversal. Extreme competitive programming is usually unnecessary.

20. Are LeetCode questions asked in data engineering interviews?

Yes, especially easy-to-medium problems focused on logic and clarity rather than deep algorithmic complexity.

Data Modeling & Warehousing FAQs

21. What is data modeling in data engineering?

Data modeling defines how data is structured inside warehouses. It involves fact tables, dimension tables, and schema design.

22. What is dimensional modeling?

Dimensional modeling organizes data into facts and dimensions for analytical queries, typically using star or snowflake schemas.

23. What are Slowly Changing Dimensions (SCD)?

SCDs manage changes in dimension data over time. Type 1 overwrites data, Type 2 preserves history, and Type 3 stores limited history.

24. What is the difference between OLTP and OLAP?

OLTP systems handle transactional workloads with row-based storage. OLAP systems handle analytical queries using columnar storage optimized for reads.

25. Why is columnar storage better for analytics?

Columnar storage reads only required columns during queries, reducing I/O and improving aggregation performance.

Data Pipelines & Architecture FAQs

26. What is a data pipeline?

A data pipeline is a system that extracts, transforms, and loads data from sources to destinations such as warehouses.

27. What is the difference between ETL and ELT?

ETL transforms data before loading into a warehouse. ELT loads raw data first and transforms inside the warehouse. Modern systems favor ELT.

28. What is idempotency in pipelines?

An idempotent pipeline can run multiple times without corrupting data or creating duplicates.

29. How do you handle pipeline failures?

Use retry logic, monitoring, alerting, and backfilling strategies to recover missing data.

30. What is workflow orchestration?

Workflow orchestration tools manage task dependencies, scheduling, and retries in data pipelines.

Distributed Systems & Real-Time FAQs

31. Why do data engineers need distributed systems knowledge?

Large-scale data cannot be processed on a single machine. Understanding partitioning, parallelism, and cluster computing is crucial.

32. What is Apache Spark used for?

Spark processes large datasets in distributed environments for batch and near-real-time analytics.

33. What is event processing?

Event processing handles real-time data streams, such as user clicks or transactions.

34. What is the difference between batch and streaming processing?

Batch processing handles data at scheduled intervals. Streaming processes data continuously, as it arrives.

35. When should you use streaming over batch?

Use streaming for low-latency use cases like fraud detection or live recommendations.

System Design & Cloud FAQs

36. What is asked in data engineering system design interviews?

Candidates may be asked to design clickstream systems, data replication pipelines, or warehouse ingestion architectures.

37. Do data engineers need to learn Docker and Kubernetes?

They are not strictly required, but understanding containers helps in deploying scalable, reliable data services and in system design discussions.

38. Why is cloud computing essential in 2026?

Most data systems run on cloud platforms. Knowing cloud storage, compute, and managed services is mandatory.

39. Which cloud platform should I learn for data engineering?

Choose one: AWS, GCP, or Azure. Master core services like storage, compute, warehousing, and streaming tools.

40. What is the most important skill for data engineers in 2026?

Fundamentals. SQL, system thinking, scalability, reliability, and business impact understanding matter more than memorizing tools.